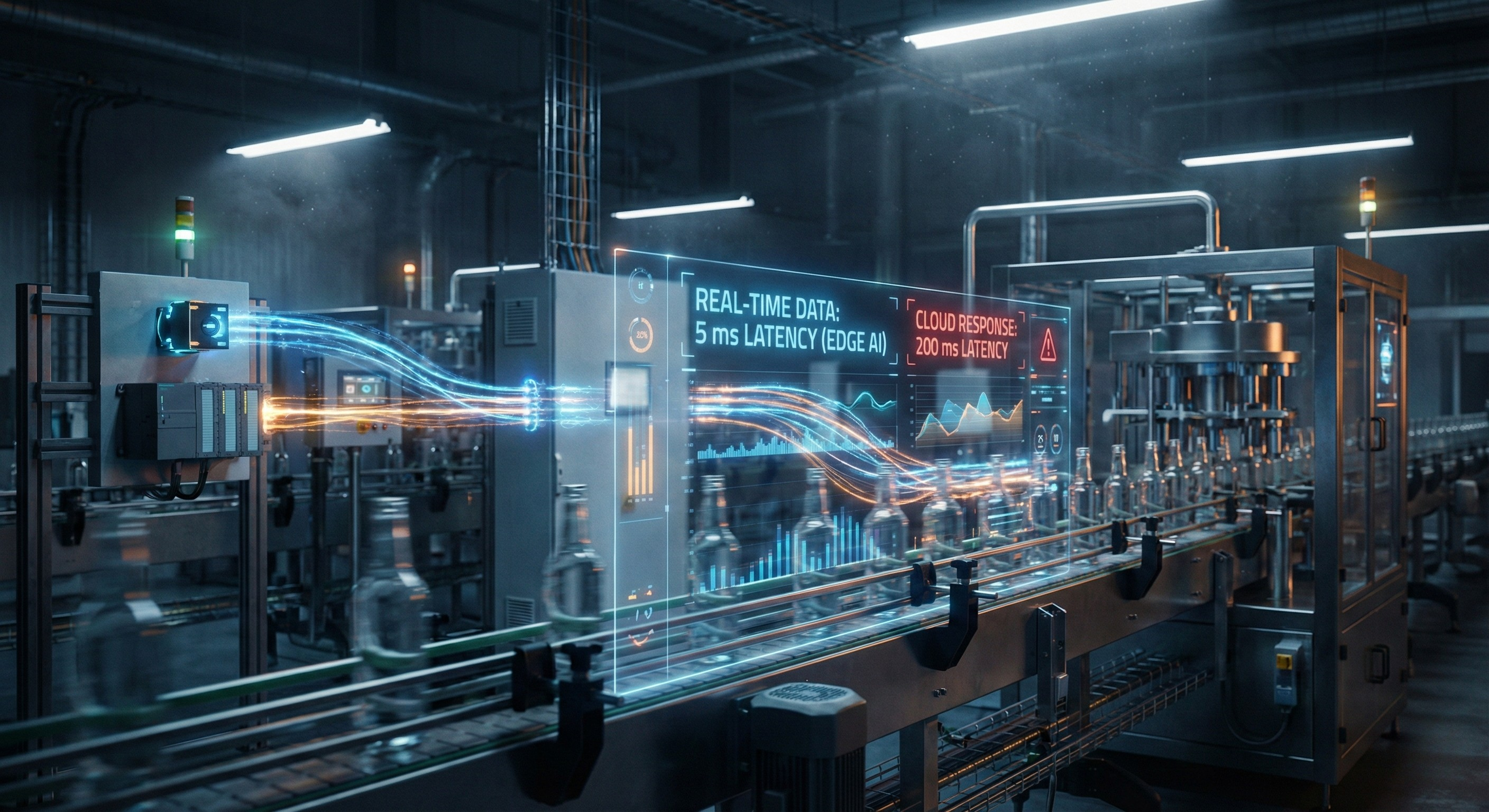

Why latency is the biggest barrier to Industrial AI and why edge computing solves it

Industrial AI promises a fundamental transformation in manufacturing. Machine learning models can detect defects, predict failures, optimize production, and enable autonomous decision making. Yet most industrial AI deployments fail to deliver real operational impact. The primary reason is not model accuracy. It is latency.

Latency determines whether an AI system can act at the speed of the physical process it is monitoring. In manufacturing, milliseconds matter. A delayed decision is often equivalent to no decision at all.

This article explains why latency is the central constraint in industrial AI, why cloud-based architectures introduce unavoidable delays, and why edge computing is the correct architectural foundation for real-time industrial intelligence.

Definition: What is latency in industrial systems

Definition:

Latency is the time delay between an event occurring in a physical system and the AI system responding to it.

Latency includes several components:

- Sensor acquisition delay
- Data transmission delay
- Processing delay
- Decision execution delay

In industrial environments, latency is typically measured in milliseconds. Even delays of 50 to 200 milliseconds can determine whether an anomaly is prevented or allowed to cause damage.

Latency is not simply a performance metric. It defines whether an AI system can operate as a real-time control layer or merely as a retrospective analytics tool.

Types of latency in industrial AI systems

Sensor latency

Time required for sensors to capture and transmit signals.

Network latency

Time required for data to travel between machines and compute infrastructure.

Inference latency

Time required for AI models to process data and generate predictions.

Control latency

Time required to execute actions such as stopping a machine or adjusting parameters.

The total latency is the sum of these components.
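A latency budget makes this sum explicit. The sketch below is a minimal Python illustration that assumes each component has already been measured in milliseconds. The `LatencyBudget` class and its example numbers are hypothetical, chosen only to show how a single slow component can dominate the total.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LatencyBudget:
    """Hypothetical per-component latency for one event-to-action path (ms)."""

    sensor_ms: float     # sensor captures and transmits the signal
    network_ms: float    # data travels to the compute infrastructure
    inference_ms: float  # model processes data and produces a prediction
    control_ms: float    # action is executed (e.g. stopping a machine)

    def components(self) -> dict[str, float]:
        return {
            "sensor": self.sensor_ms,
            "network": self.network_ms,
            "inference": self.inference_ms,
            "control": self.control_ms,
        }

    def total_ms(self) -> float:
        # Total latency is the sum of the individual components.
        return sum(self.components().values())

    def dominant(self) -> str:
        # Name of the component contributing the most delay.
        parts = self.components()
        return max(parts, key=parts.get)


# Illustrative numbers only, not measurements.
budget = LatencyBudget(sensor_ms=2, network_ms=120, inference_ms=15, control_ms=8)
print(budget.total_ms(), budget.dominant())
```

With these illustrative values the network hop accounts for most of the 145 ms total. Reducing the model's inference time would barely change the result.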

Why latency matters in physical systems vs software systems

Latency affects all computing systems, but its impact is fundamentally different in physical environments.

Software systems tolerate latency

In typical software applications such as web platforms or enterprise dashboards:

- Latency of 100 to 500 milliseconds is acceptable
- Delays affect user experience but not physical outcomes
- Decisions do not need to synchronize with mechanical motion

For example, a delayed recommendation in an e-commerce platform has negligible physical consequences.

Physical systems operate continuously in real time

Manufacturing equipment operates at machine speed. Decisions must align with physical processes that cannot pause while waiting for computation.

Examples:

- A bottling line fills 1,000 bottles per minute
- A CNC spindle rotates at 20,000 RPM
- A packaging line processes one unit every 50 milliseconds

If an AI system responds after the critical moment, the opportunity to prevent defects or failures is lost.

This requirement is known as machine-speed decision making.
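Converting these rates into time per cycle shows how narrow the decision window is. The following is a minimal sketch that treats one cycle as one bottle, one spindle revolution, or one packaged unit. The helper names and the 100 ms response time are hypothetical, used only for illustration.

```python
def ms_per_cycle(events_per_minute: float) -> float:
    """Time between consecutive events (bottles, revolutions, units) in ms."""
    return 60_000 / events_per_minute


def cycles_elapsed(latency_ms: float, cycle_ms: float) -> float:
    """How many cycles pass while the system is still computing a response."""
    return latency_ms / cycle_ms


bottle_ms = ms_per_cycle(1_000)    # 60.0 ms per bottle
spindle_ms = ms_per_cycle(20_000)  # 3.0 ms per revolution
packaging_ms = 50.0                # given directly in the example

for name, cycle in [("bottle", bottle_ms), ("spindle", spindle_ms), ("packaging", packaging_ms)]:
    # Hypothetical 100 ms response time.
    print(f"{name}: {cycle:.1f} ms/cycle, {cycles_elapsed(100, cycle):.1f} cycles during 100 ms")
```

At a 100 ms response time, roughly 33 spindle revolutions and two packaged units go by before the response arrives.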

Real-time systems require deterministic execution

Industrial control systems operate under deterministic constraints.

Deterministic execution means that a system responds within a predictable time window every time.

Cloud-based systems cannot guarantee deterministic latency due to network variability.

Edge systems can, because the decision path never leaves the facility.
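Determinism concerns the worst case, not the average. The sketch below assumes a list of latency samples in milliseconds has already been collected. It reports the mean, the worst case, and the jitter against a deadline. `TimingReport`, `check_deadline`, the 20 ms deadline, and both sample sets are hypothetical. The two sets share the same mean, yet only the steady one stays inside the window every time.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class TimingReport:
    mean_ms: float
    worst_ms: float
    jitter_ms: float    # spread between fastest and slowest response
    deadline_met: bool  # True only if every sample finished in time


def check_deadline(samples_ms: list[float], deadline_ms: float) -> TimingReport:
    """Summarize latency samples against a hard deadline (hypothetical helper)."""
    worst = max(samples_ms)
    return TimingReport(
        mean_ms=mean(samples_ms),
        worst_ms=worst,
        jitter_ms=worst - min(samples_ms),
        deadline_met=worst <= deadline_ms,
    )


# Invented samples, both with a 9 ms mean.
steady = [8, 9, 8, 10, 9, 8, 9, 10, 9, 10]
variable = [4, 5, 4, 6, 5, 4, 5, 4, 5, 48]

print(check_deadline(steady, deadline_ms=20))
print(check_deadline(variable, deadline_ms=20))
```

A system judged only by its mean latency would treat these two as equivalent. A real-time requirement rejects the second because of its single 48 ms outlier.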

Real-world examples of latency impact in manufacturing

Latency directly determines whether industrial AI produces measurable operational improvements.

Example 1: Defect detection in high-speed production

Consider a packaging line running at 300 units per minute. A new unit reaches the inspection point roughly every 200 milliseconds.

An AI vision system must:

- Capture image
- Process image
- Identify defect
- Trigger reject mechanism

If the combined latency of these steps exceeds the time window before the unit leaves the rejection zone, defective products continue downstream.

Outcome:

- Low latency enables real-time rejection
- High latency results in defective products reaching customers

Even 100 milliseconds of additional latency can prevent effective intervention.
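The inspection example reduces to comparing the pipeline's total latency with the time available per unit. The following is a minimal sketch that assumes, as a simplification, that the rejection window equals the 200 ms spacing between units. The helper names and per-stage timings are hypothetical; the stage names mirror the four steps listed above.

```python
def inspection_window_ms(units_per_minute: float) -> float:
    # Simplifying assumption: a unit must be rejected before the next one arrives.
    return 60_000 / units_per_minute


def can_reject(stage_latencies_ms: dict[str, float], window_ms: float) -> bool:
    # The reject signal must be issued before the window closes.
    return sum(stage_latencies_ms.values()) <= window_ms


window = inspection_window_ms(300)  # 200.0 ms at 300 units per minute

# Hypothetical per-stage timings.
stages = {"capture": 15, "process": 60, "identify": 25, "trigger": 30}
print(can_reject(stages, window))  # 130 ms total

slower = dict(stages, process=stages["process"] + 100)  # 100 ms of added latency
print(can_reject(slower, window))  # 230 ms total
```

The same pipeline goes from fitting comfortably to missing the window once 100 ms is added anywhere along the path.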

Example 2: Machine shutdown prevention

Mechanical failures often begin with subtle signals such as vibration changes or thermal deviations.

These anomalies may escalate within seconds.

An AI system monitoring vibration must detect anomalies and trigger preventive actions before failure occurs.

If the anomaly occurs at time T and failure occurs at T + 500 milliseconds, an AI system with 300 milliseconds of latency can prevent failure, provided the corrective action takes effect within the remaining 200 milliseconds. A system with 800 milliseconds of latency cannot.

This difference determines whether AI enables prevention or merely post-failure analysis.
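The timing condition in this example can be written out explicitly. Here $L$ is the total latency and $t_a$ is the time the corrective action needs to take effect once triggered. $t_a$ is a symbol added for this derivation; the example above does not name it.

```latex
\begin{aligned}
T + L + t_a &< T + 500\,\text{ms}
  \quad\Longleftrightarrow\quad L + t_a < 500\,\text{ms} \\
L = 300\,\text{ms} &\;\Rightarrow\; t_a < 200\,\text{ms} \quad \text{(prevention possible if the action is fast enough)} \\
L = 800\,\text{ms} &\;\Rightarrow\; L + t_a > 500\,\text{ms} \text{ for any } t_a \ge 0 \quad \text{(prevention impossible)}
\end{aligned}
```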

Example 3: Micro-stoppage detection

Micro-stoppages are brief interruptions that last from milliseconds to seconds. They often accumulate into significant productivity losses.

Micro-stoppages may occur due to:

- Sensor misalignment
- Material inconsistencies
- Mechanical friction

Real-time detection requires continuous analysis of high-frequency signals.

If latency is too high, micro-stoppages remain invisible because they occur and resolve faster than cloud-based analysis cycles.

Only low-latency systems can detect and respond to micro-stoppages in real time.
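One way to find micro-stoppages is to scan a high-rate run/stop signal for short stop intervals. The sketch below assumes a boolean "running" signal sampled at a fixed period. `find_micro_stoppages` and the signal itself are hypothetical. The example also reproduces the effect described above: polling the same signal once per second misses a 300 ms stop entirely.

```python
def find_micro_stoppages(
    running: list[bool], sample_period_ms: float, max_duration_ms: float
) -> list[tuple[float, float]]:
    """Return (start_ms, duration_ms) for each completed stop no longer than max_duration_ms.

    Duration resolution is limited to one sample period. A stop still in progress
    at the end of the signal is ignored because it has not resolved yet.
    """
    events = []
    stop_start = None
    for i, is_running in enumerate(running):
        if not is_running and stop_start is None:
            stop_start = i  # machine just stopped
        elif is_running and stop_start is not None:
            duration = (i - stop_start) * sample_period_ms
            if duration <= max_duration_ms:
                events.append((stop_start * sample_period_ms, duration))
            stop_start = None  # machine restarted
    return events


# Hypothetical 3 s signal sampled every 10 ms, with a 300 ms stop starting at 1.2 s.
signal = [True] * 300
for i in range(120, 150):
    signal[i] = False

print(find_micro_stoppages(signal, 10, 5_000))            # [(1200, 300)]

# Polling the same signal once per second (every 100th sample) misses the stop.
print(find_micro_stoppages(signal[::100], 1_000, 5_000))  # []
```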

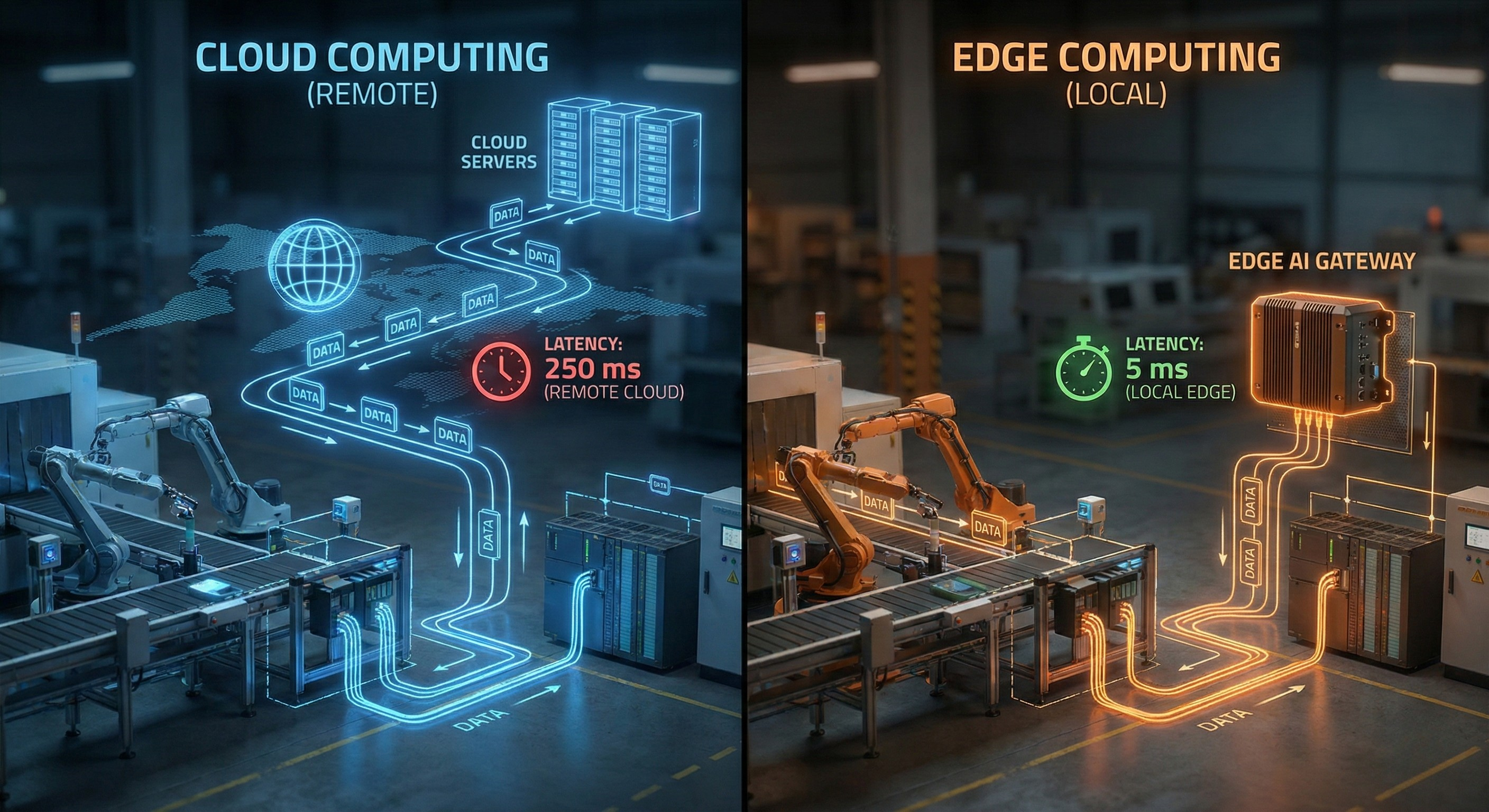

Why cloud-based AI introduces latency

Cloud computing is optimized for scalability, not real-time responsiveness.

Cloud-based industrial AI architectures require multiple sequential steps:

- Data capture from sensors
- Data transmission over network
- Internet routing
- Cloud ingestion
- Model inference
- Response transmission back to machine

Each step introduces latency.

Network transmission is the primary source of latency

Typical network latencies:

- Local Ethernet: 1 to 5 milliseconds
- Local edge compute: 1 to 10 milliseconds total latency
- Cloud round trip: 50 to 300 milliseconds or more

These delays are incompatible with many industrial processes.

Network latency is non-deterministic

Cloud latency fluctuates due to:

- Network congestion
- Internet routing variability
- Cloud resource contention

This variability prevents deterministic execution.

Industrial systems require predictable timing.

Cloud infrastructure cannot guarantee consistent millisecond-level responsiveness.

Data volume compounds latency

Industrial sensors generate high-frequency data streams.

Examples:

- Vision systems capture multiple frames per second
- Vibration sensors sample at kilohertz rates
- PLC signals update continuously

Transmitting large volumes of data to the cloud increases transmission delay and processing bottlenecks.

Adding cloud capacity does not shorten the transmission path, so scaling cloud infrastructure does not make it suitable for real-time decision making.
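A back-of-the-envelope calculation shows why raw sensor data is expensive to send off-site. Every figure in this sketch is an assumption chosen for illustration: a 3-axis vibration sensor sampled at 25.6 kHz with 4-byte samples, an uncompressed 8-bit 1920×1080 camera at 30 frames per second, and a 100 Mbit/s uplink. The helper names are hypothetical.

```python
def bytes_per_second(sample_rate_hz: float, channels: int, bytes_per_sample: int) -> float:
    """Raw data rate of a sampled signal."""
    return sample_rate_hz * channels * bytes_per_sample


def transfer_ms(payload_bytes: float, link_mbps: float) -> float:
    """Serialization time for a payload on a link, ignoring propagation and protocol overhead."""
    return payload_bytes * 8 * 1000 / (link_mbps * 1_000_000)


# Assumed sensor and link parameters (not from the article).
vibration_bps = bytes_per_second(25_600, channels=3, bytes_per_sample=4)  # 307,200 B/s
frame_bytes = 1920 * 1080 * 1                                             # 2,073,600 B per frame
camera_bps = frame_bytes * 30                                             # 62,208,000 B/s
camera_mbps = camera_bps * 8 / 1_000_000                                  # ~497.7 Mbit/s

uplink_mbps = 100
print(f"camera needs {camera_mbps:.1f} Mbit/s on a {uplink_mbps} Mbit/s uplink")
print(f"one raw frame takes {transfer_ms(frame_bytes, uplink_mbps):.1f} ms to send")
```

Under these assumptions, a single uncompressed frame needs about 166 ms just to cross the uplink. That is more than three cycles of the 50 ms packaging line described earlier.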

How edge computing reduces latency

Edge computing places computation physically close to machines.

Instead of sending data to distant cloud servers, inference occurs locally.

This removes the round trip to remote servers, the primary source of latency in cloud architectures.

Definition: Edge computing in industrial AI

Edge computing refers to executing AI models on local compute infrastructure located near or within industrial equipment.

This infrastructure may include:

- Industrial PCs
- Embedded systems
- AI accelerators
- Edge servers located within the facility

Edge computing minimizes inference latency

Typical edge inference latency ranges from 1 to 20 milliseconds.

This enables real-time responses aligned with machine operation speed.
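Edge latency figures like these are best verified on the target hardware. The sketch below is a minimal timing harness built on Python's `time.perf_counter` and `statistics.quantiles`. `measure_latency_ms` and `dummy_model` are hypothetical, with `dummy_model` standing in for a real model. On a general-purpose operating system the percentiles describe observed behavior, not a guaranteed bound.

```python
import time
from statistics import quantiles
from typing import Any, Callable


def measure_latency_ms(
    infer: Callable[[Any], Any], sample: Any, runs: int = 200, warmup: int = 10
) -> dict[str, float]:
    """Time repeated calls to `infer` and return p50, p99, and max latency in ms."""
    for _ in range(warmup):
        infer(sample)  # warm caches and lazy initialization before timing
    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        infer(sample)
        timings.append((time.perf_counter() - start) * 1000.0)
    cuts = quantiles(timings, n=100)  # 99 cut points: cuts[49] = p50, cuts[98] = p99
    return {"p50": cuts[49], "p99": cuts[98], "max": max(timings)}


def dummy_model(features: list[float]) -> bool:
    # Hypothetical stand-in: flags an anomaly when signal energy exceeds 1.0.
    return sum(v * v for v in features) > 1.0


stats = measure_latency_ms(dummy_model, [0.1] * 1_000)
print({k: round(v, 4) for k, v in stats.items()})
```

Reporting p99 and max alongside the median matters here, because deterministic execution depends on the slowest responses rather than the typical ones.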

Edge computing provides:

- Immediate data processing
- Deterministic response times
- Continuous real-time monitoring

Edge computing enables deterministic execution

Because computation occurs locally:

- Wide-area network variability is removed from the decision path
- Response timing becomes predictable
- Systems can operate within strict real-time constraints

This enables reliable machine-speed decision making.

Architecture comparison: cloud vs edge

Cloud architecture

Data flow:

Sensor → Network → Internet → Cloud server → Model inference → Network → Machine

Characteristics:

- High latency
- Non-deterministic response times
- Dependent on network connectivity
- Optimized for batch processing and analytics

Limitations:

- Cannot guarantee real-time response
- Unsuitable for control-critical applications

Edge architecture

Data flow:

Sensor → Local compute → Model inference → Machine

Characteristics:

- Low latency
- Deterministic response
- Independent of internet connectivity
- Optimized for real-time systems

Advantages:

- Enables machine-speed decision making
- Supports continuous real-time control
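The two data flows can be compared as best-case and worst-case sums of per-hop latency. The ranges for local Ethernet, cloud round trip, and edge inference come from the figures quoted earlier in this article. The cloud inference range is an assumption, and actuation time is omitted from both paths. The 50 ms deadline is the packaging-line cycle from the earlier example. `path_range_ms` and `classify` are hypothetical helpers.

```python
Hop = tuple[str, float, float]  # (name, min_ms, max_ms)

CLOUD_PATH: list[Hop] = [
    ("local network", 1, 5),        # local Ethernet, 1-5 ms
    ("cloud round trip", 50, 300),  # 50-300 ms "or more", so the max is optimistic
    ("cloud inference", 5, 20),     # assumption: not given in the article
]

EDGE_PATH: list[Hop] = [
    ("local network", 1, 5),        # local Ethernet, 1-5 ms
    ("edge inference", 1, 20),      # typical edge inference, 1-20 ms
]


def path_range_ms(path: list[Hop]) -> tuple[float, float]:
    """Best-case and worst-case end-to-end latency of a path."""
    return sum(h[1] for h in path), sum(h[2] for h in path)


def classify(path: list[Hop], deadline_ms: float) -> str:
    best, worst = path_range_ms(path)
    if worst <= deadline_ms:
        return "always meets deadline"
    if best > deadline_ms:
        return "never meets deadline"
    return "meets deadline only sometimes"


for name, path in [("cloud", CLOUD_PATH), ("edge", EDGE_PATH)]:
    print(name, path_range_ms(path), classify(path, deadline_ms=50))
```

Under these ranges, even the cloud path's best case (56 ms) misses a 50 ms cycle, while the edge path's worst case (25 ms) fits inside it.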

Why real-time AI requires local execution

Real-time industrial AI requires synchronized interaction with physical processes.

Physical systems operate continuously. Computation must operate at the same speed.

Local execution ensures:

- Immediate access to sensor data
- Immediate decision making
- Immediate execution of corrective actions

This enables closed-loop control systems powered by AI.

Real-time control requires bounded latency

Real-time systems operate under strict timing constraints.

If response latency exceeds the defined window, the system fails to meet real-time requirements.

Cloud systems cannot guarantee bounded latency.

Edge systems can.

How latency prevents autonomous manufacturing

Autonomous manufacturing requires continuous perception, reasoning, and action.

This requires real-time AI execution.

Latency prevents autonomy in several ways.

Latency breaks control loops

Control loops require continuous feedback.

High latency introduces delays that destabilize control systems.

This prevents reliable autonomous operation.
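The destabilizing effect of delay shows up even in a toy model. The sketch below is a hypothetical discrete-time loop, not a model of any specific machine. The deviation from setpoint carries over from cycle to cycle, and a proportional correction with gain 0.5 is applied using a measurement that is `delay_steps` cycles old. `simulate_delayed_loop` and its parameters are illustrative. With no delay the deviation decays; with a five-cycle delay, the same gain makes it oscillate with growing amplitude.

```python
def simulate_delayed_loop(
    gain: float, delay_steps: int, steps: int = 300, initial_error: float = 1.0
) -> list[float]:
    """Simulate x[k+1] = x[k] - gain * x[k - delay_steps].

    x is the deviation from setpoint. The controller acts on a measurement that is
    delay_steps cycles old (e.g. latency divided by cycle time). The error is assumed
    to have been constant at initial_error before the simulation starts.
    """
    history = [initial_error] * (delay_steps + 1)
    for _ in range(steps):
        stale = history[-1 - delay_steps]  # measurement the controller actually sees
        history.append(history[-1] - gain * stale)
    return history


no_delay = simulate_delayed_loop(gain=0.5, delay_steps=0)
lagged = simulate_delayed_loop(gain=0.5, delay_steps=5)

print(f"no delay: final |error| = {abs(no_delay[-1]):.2e}")
print(f"5-cycle delay: peak |error| = {max(abs(v) for v in lagged):.2e}")
```

For this update rule, the gain is stable only when 0 < gain < 2cos(dπ/(2d+1)) for a delay of d cycles. That bound is 2 with no delay, about 0.62 at d = 2, and about 0.28 at d = 5. A loop tuned for low latency can therefore become unstable once latency increases.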

Latency limits predictive capability

Predictions must be generated before failures occur.

If latency delays prediction beyond the failure threshold, predictive AI loses its value.

Latency prevents machine-speed adaptation

Autonomous systems must adapt continuously to changing conditions.

High latency prevents timely adaptation.

Edge computing enables continuous adaptive control.

Why milliseconds matter in industrial AI

Milliseconds determine whether intervention occurs before or after a critical event.

Consider a system operating at 100 cycles per second.

Each cycle lasts 10 milliseconds.

An AI system with 100 milliseconds latency operates 10 cycles behind reality.

This prevents effective control.

An AI system with 5 milliseconds latency operates within the same cycle.

This enables real-time control.

Milliseconds determine whether AI becomes operational infrastructure or merely an analytics tool.

FAQ: Industrial AI latency and edge computing

What is latency in industrial AI?

Latency is the time delay between an event occurring in a machine and the AI system responding to it. In manufacturing, latency is typically measured in milliseconds and directly determines whether AI can prevent defects or failures.

Why is latency important in manufacturing AI?

Manufacturing systems operate continuously at high speed. Decisions must occur within strict timing windows. High latency prevents timely intervention and limits AI effectiveness.

Why does cloud AI introduce latency?

Cloud AI requires transmitting data over networks to remote servers. Network transmission, processing, and response cycles introduce delays ranging from tens to hundreds of milliseconds.

What is edge computing in manufacturing?

Edge computing executes AI models locally near machines. This removes the round trip to remote servers and enables real-time decision making.

How much latency does edge computing reduce?

Edge computing typically reduces latency from 50 to 300 milliseconds in cloud systems to 1 to 20 milliseconds in local edge systems.

Why is real-time industrial AI necessary?

Real-time industrial AI enables immediate detection of defects, prediction of failures, and optimization of machine performance. Without real-time response, AI cannot influence physical outcomes.

Can cloud AI support autonomous manufacturing?

Cloud AI alone cannot support autonomous manufacturing due to latency and network variability. Autonomous systems require deterministic, low-latency local execution.

What is deterministic execution?

Deterministic execution means that a system responds within a predictable and guaranteed time window. This is essential for real-time industrial control.

Conclusion

Latency is the defining constraint in industrial AI.

Manufacturing systems operate in continuous real time. AI must operate at the same speed.

Cloud architectures introduce unavoidable latency and non-deterministic response times. These characteristics prevent real-time control and limit AI effectiveness.

Edge computing removes the round trip to remote servers by placing computation near machines. This enables deterministic execution, machine-speed decision making, and real-time industrial intelligence.

Industrial AI cannot achieve its full potential without solving the latency problem.

Edge computing provides the architectural foundation required for real-time, autonomous manufacturing systems.